SBA’s Approach to Identifying Data, Using a Learning Agenda, and Leveraging Partnerships to Build its Evidence Base

Overview

Through its Enterprise Learning Agenda, the Small Business Administration’s (SBA) staff identify essential research questions, plan how to answer them, and determine how data held outside the agency can provide further insights. Other agencies can learn from the innovative ways SBA identifies data to answer agency strategic questions and adopt those aspects that work for their own needs.

Details

Originally published August 2, 2019

The Small Business Administration’s (SBA) evaluation office employs cutting-edge and creative approaches to access and use data to assess the agency’s programs and advance its strategic goals.

Through its Enterprise Learning Agenda, SBA staff identify essential research questions, plan how to answer them, and determine how data held outside the agency can provide further insights. The SBA also emphasizes the use of administrative data, a rich resource that other agencies are also beginning to incorporate into evidence-based decision making. This approach generates insights into the agency’s operations, spurs valuable stakeholder engagement, and shows how cost-effective short-term evaluations can provide fast answers that complement multi-year, large-scale impact analyses.

Other agencies can learn from the innovative ways SBA identifies data to answer agency strategic questions and adopt those aspects that work for their own needs.

SBA’s Enterprise Learning Agenda

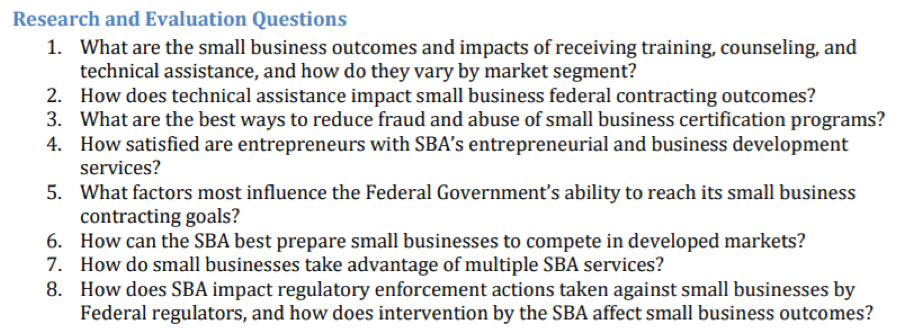

SBA’s FY 2018 Enterprise Learning Agenda (ELA) is organized around the agency’s four FY 2018 – 2022 Strategic Plan goals: (1) support small business revenue and job growth, (2) build healthy entrepreneurial ecosystems and create business-friendly environments, (3) restore small businesses and communities after disasters, and (4) strengthen the SBA’s ability to serve small businesses. For each strategic goal, the ELA gives a brief overview of what prior research revealed, then lists key research and evaluation questions. Finally, the ELA lays out several evaluations planned for the coming fiscal year to answer a subset of those questions.

The ELA goes on to describe how the planned evaluations will use available data to answer these questions. The SBA has begun to identify datasets, both internal and external to the agency, that can help. While scoping an evaluation, the evaluation team asks what data are available and who manages them. These questions start a conversation about the data, their history, and their quality. For example, in 2017 the SBA began this conversation with the HUBZone program manager to help improve program outcomes. The HUBZone program helps the Federal Government meet its goal of awarding a share of its prime contracts to HUBZone-certified businesses. The evaluation informed the broader research questions and strategic goal by examining which factors help or hinder agencies in meeting this goal, as well as the characteristics of the small businesses that win these contracts.

Like all evaluations laid out in the ELA, the HUBZone evaluation was planned to help answer questions identified by senior leadership. In this case, it helps address the fifth research and evaluation question associated with the agency’s second strategic goal: what factors most influence the Federal Government’s ability to reach its small business contracting goals?

Identifying Data Needed to Answer Questions

SBA’s evaluation work shows that meaningful insights can be derived without extensive use of randomized trials or surveys. Instead, administrative data (the information created in the process of running a government program) can sometimes be used to answer evaluation questions. In the HUBZone evaluation, researchers produced valuable results in a short timeframe by analyzing existing data in the Federal Procurement Data System (which tracks contract awards for federal agencies) and SBA’s own program data accrued in administering the HUBZone certification process.

Many administrative databases are optimized for transactions between program officials and clients. While they may have report-generation functions, they are typically not easy to use for analysis. Arcane category codes, unique formatting, and heavily fragmented tables mean that analysts unfamiliar with the program’s administration struggle to turn the information into an analytical dataset. The HUBZone evaluators persevered thanks to frequent communication with the program office, which helped them learn how to extract, cross-walk, and ascribe meaning to the data extracts. It was also critical to partner with the program’s attorneys, who could convey its legal requirements.
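The cross-walk and linking work described above can be illustrated with a minimal Python sketch. Everything in it is hypothetical: the status codes, the `vendor_id` field, and the sample records are invented for illustration and do not reflect the actual layout of the Federal Procurement Data System or SBA’s HUBZone certification data. The sketch translates coded certification records into readable labels, then links them to contract award records to compute the share of award dollars going to certified firms.

```python
from dataclasses import dataclass

# Hypothetical crosswalk from terse status codes in a certification
# extract to analyst-readable labels. These codes are illustrative only.
STATUS_CROSSWALK = {
    "A": "certified",
    "D": "decertified",
    "P": "pending",
}


@dataclass
class Award:
    vendor_id: str  # hypothetical identifier assumed to exist in both extracts
    dollars: float


def build_status_lookup(cert_rows):
    """Translate (vendor_id, code) rows into a {vendor_id: label} lookup."""
    lookup = {}
    for vendor_id, code in cert_rows:
        # Normalize formatting quirks, and keep unrecognized codes visible
        # (rather than silently dropping them) so they can be raised with
        # the program office.
        lookup[vendor_id] = STATUS_CROSSWALK.get(
            code.strip().upper(), f"unknown:{code}"
        )
    return lookup


def certified_share(awards, status_lookup):
    """Share of total award dollars going to vendors labeled 'certified'."""
    total = sum(a.dollars for a in awards)
    if total == 0:
        return 0.0
    certified = sum(
        a.dollars
        for a in awards
        if status_lookup.get(a.vendor_id) == "certified"
    )
    return certified / total


if __name__ == "__main__":
    certs = [("V1", "a"), ("V2", "D"), ("V3", "X")]
    awards = [Award("V1", 300.0), Award("V2", 100.0), Award("V4", 600.0)]
    lookup = build_status_lookup(certs)
    print(lookup)
    print(certified_share(awards, lookup))
```

For simplicity, this sketch ignores timing: a real analysis would likely need to check each firm’s certification status as of the award date, which is exactly the kind of rule that conversations with program staff and attorneys help pin down.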

Combined with qualitative information from interviews with agency and program employees, analysis of these data brought meaningful evidence to bear on 1) the HUBZone program’s improvement goals, 2) the question of what factors most influence the Federal government’s ability to reach its small business contracting goals, and 3) the goal of building healthy entrepreneurial ecosystems and creating business-friendly environments.

The SBA’s FY 2019 update to the ELA recaps lessons learned through its FY 2018 evaluations and notes plans to build on its work with the HUBZone program. In this way, the agency has pursued an agile approach to evaluating HUBZone and other programs, one that yields intermediate results and actionable insights.

“Often, once an evaluation is completed, it leads to other, more in-depth questions, that need to be answered. We keep finding more pieces to the puzzle. The evidence becomes clearer and evaluations are used to support decisions.”

— Jason Bossie (Director, Office of Program Performance, Analysis, and Evaluation)

Outside help

The HUBZone evaluation and similar efforts demonstrated that short-term, affordable evaluations can shed light on crucial evidence-related questions. Some questions linked to SBA’s strategic goals, however, cannot be answered with limited internal resources alone. To address these, the SBA has built partnerships with stakeholders outside the agency to support its evaluations. Many research universities and other organizations with research and evaluation expertise can provide this support.

“Leverage partnerships and identify groups and associations with an interest in your policies and programs. Many universities have specialized schools that focus on your policy areas. With these partnerships, data can be shared that benefits both organizations. Your agency can receive support to help answer key policy and programmatic questions while the researchers may be able to leverage an agency’s administrative data to answer key theoretical questions to support their research.”

— Jason Bossie (Director, Office of Program Performance, Analysis, and Evaluation)

Completing the circle

As the SBA’s evaluation work matures, the agency is increasingly working with program officials to change data collection and other upstream aspects of data creation, so that the resulting data can support more powerful downstream analysis and evaluation.

The SBA’s evaluation work has also led to investments in developing a data-driven culture across the rest of the agency and the wider Federal government. The SBA also innovates in data sharing: it began partnering with the U.S. Census Bureau to provide extracts of its loan data through the Federal Statistical Research Data Centers. In the same vein, the SBA’s FY 2019 ELA update shared more than 30 Federal government administrative and employer datasets that proved valuable for SBA’s evaluation work and could help others developing evidence in related research areas.

Postscript

Visit www.sba.gov/evaluation to learn more about SBA’s Enterprise Learning Agenda and recently completed evaluations.

The Federal Data Strategy Incubator Project

The Incubator Project helps federal data practitioners think through how to improve government services, enabling the public to get the most out of federal data. This Proof Point and others will highlight the many successes and challenges data innovators face every day, revealing valuable lessons learned to share with data practitioners throughout government.

Guidance references

- FDS Practice 01 Identify Data Needs to Answer Key Agency Questions

- FDS Practice 04 Use Data to Guide Decision-Making

- FDS Practice 06 Convey Insights from Data

- FDS Practice 17 Recognize the Value of Data Assets

- FDS Practice 22 Identify Opportunities to Overcome Resource Obstacles

- FDS Principle 03 Promote Transparency

- FDS Principle 05 Harness Existing Data

- FDS Principle 08 Invest in Learning